Decoding the Universal Language: What is Unicode?

In our interconnected digital world, seamless communication across languages and platforms is not just a convenience; it's a necessity. Imagine a global internet where every character, symbol, and emoji you see could potentially render as garbled, unreadable text. This chaotic scenario was once a significant challenge, but thanks to Unicode, we now have a robust, universal standard for character encoding that underpins virtually all modern text processing. Our journey into the comprehensive Unicode table begins with understanding its fundamental purpose: to provide a unique numerical identity, or "code point," for every character across all known writing systems, historical scripts, and a vast array of symbols.

Before Unicode, the digital landscape was a fragmented mess of incompatible character sets. ASCII, suitable for English, was quickly overwhelmed by the demands of other languages. Regional code pages emerged to support specific language groups, but they led to "mojibake" (garbled text) when data moved between systems using different code pages. Unicode solved this by creating a single, unified character set that assigns a distinct code point to each character, such as U+0041 for the Latin capital letter 'A' or U+2764 for the heavy black heart ❤ (rendered as the red heart emoji ❤️ when followed by the variation selector U+FE0F). The code space has room for over a million code points (1,114,112 in total), of which only a fraction are assigned so far. This monumental effort ensures that text created in one language or system can be correctly displayed and processed anywhere else, fostering true global interoperability.
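As a small illustration of what a code point is, Python's built-in `ord` and `chr` convert between a character and its number. This is only a sketch; the helper name `u_notation` is made up for the example.

```python
# Map characters to their Unicode code points and back.
letter = "A"
heart = "\u2764"  # HEAVY BLACK HEART, written as an escape to avoid font issues

letter_cp = ord(letter)  # 65, which is 0x41 in hexadecimal
heart_cp = ord(heart)    # 0x2764


def u_notation(ch: str) -> str:
    """Format one character's code point in the conventional U+XXXX style."""
    return f"U+{ord(ch):04X}"


print(u_notation(letter))  # U+0041
print(u_notation(heart))   # U+2764
print(chr(0x41))           # A
```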

The Vastness of the Unicode Table

The Unicode table is not merely a list; it's an extensive, meticulously organized map of human communication. It encompasses:

- Scripts from Around the World: Latin, Cyrillic, Greek, Arabic, Hebrew, Devanagari, Han (Chinese, Japanese, Korean), Thai, and more than a hundred others.

- Historical and Ancient Scripts: Hieroglyphs, Cuneiform, Runes, offering a digital bridge to the past.

- Mathematical and Technical Symbols: A comprehensive set for scientific and engineering notations.

- Punctuation and Diacritics: Ensuring proper linguistic representation.

- Miscellaneous Symbols and Emojis: From currency signs to the expressive visual language that has become integral to digital conversations.

This vast collection is structured into 17 "planes," each containing 65,536 code points. The Basic Multilingual Plane (BMP), or Plane 0, holds the most commonly used characters, while the supplementary planes hold less common characters, many historical scripts, and most emoji. This systematic organization makes it possible to manage and retrieve specific characters efficiently, laying the groundwork for how computers process and display text.
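Because every plane spans 65,536 (0x10000) code points, a character's plane follows from integer division of its code point. The sketch below assumes nothing beyond the standard library; `plane_of` and `in_bmp` are illustrative names.

```python
import sys

PLANE_SIZE = 0x10000  # 65,536 code points per plane


def plane_of(ch: str) -> int:
    """Return the plane number (0 to 16) that a single character belongs to."""
    return ord(ch) // PLANE_SIZE


def in_bmp(ch: str) -> bool:
    """True when the character lives in the Basic Multilingual Plane (Plane 0)."""
    return plane_of(ch) == 0


print(plane_of("A"))                  # 0
print(plane_of("\u4e2d"))             # 0  (a common Han character)
print(plane_of("\U0001F600"))         # 1  (U+1F600, an emoji)
print(plane_of(chr(sys.maxunicode)))  # 16 (the last plane)
```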

Navigating the Maze of Encoding: UTF-8, UTF-16, and UTF-32

While Unicode defines what a character is, character encoding dictates how that character's code point is represented as a sequence of bytes for storage and transmission. This distinction is crucial. Without a consistent encoding scheme, even a perfect Unicode code point can turn into unreadable gibberish. The most prevalent encoding forms are UTF-8, UTF-16, and UTF-32, each with its own advantages and use cases.

- UTF-8: The Web's Lingua Franca. UTF-8 is a variable-width encoding, meaning different characters are represented by a different number of bytes (1 to 4 bytes per character). Its ingenious design makes it backward-compatible with ASCII (single-byte characters like 'A' are identical in both ASCII and UTF-8), which greatly facilitated its adoption. UTF-8's efficiency for English text and its ability to represent all Unicode characters has made it the dominant encoding on the web, in operating systems (like Linux), and for general data exchange.

- UTF-16: Windows and Java's Choice. UTF-16 is another variable-width encoding, using either two or four bytes per character (one or two 16-bit code units). It's widely used internally by Windows operating systems and in programming environments like Java. Characters in the BMP fit into two bytes, while characters outside it require "surrogate pairs": two 16-bit code units that together represent a single code point.

- UTF-32: Simple but Resource-Intensive. UTF-32 is a fixed-width encoding, using exactly four bytes for every single Unicode character. This simplicity makes character indexing straightforward, but it's often inefficient for storage and transmission, especially for languages predominantly using characters that could be represented by fewer bytes. Consequently, its use is less widespread than UTF-8 or UTF-16.
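The size differences between the three encoding forms are easy to check with Python's `str.encode`. This sketch uses the little-endian codec names (`utf-16-le`, `utf-32-le`) so that no byte order mark is added to the counts; `encoded_lengths` is a name chosen for the example.

```python
def encoded_lengths(ch: str) -> dict:
    """Return the number of bytes one character occupies in each encoding form."""
    return {
        "utf-8": len(ch.encode("utf-8")),
        "utf-16": len(ch.encode("utf-16-le")),  # -le variant: no BOM prepended
        "utf-32": len(ch.encode("utf-32-le")),
    }


samples = {
    "A": "A",                  # U+0041, ASCII range
    "e-acute": "\u00e9",       # U+00E9
    "han": "\u4e2d",           # U+4E2D
    "emoji": "\U0001F600",     # U+1F600, outside the BMP
}

for label, ch in samples.items():
    print(label, encoded_lengths(ch))
# A       {'utf-8': 1, 'utf-16': 2, 'utf-32': 4}
# e-acute {'utf-8': 2, 'utf-16': 2, 'utf-32': 4}
# han     {'utf-8': 3, 'utf-16': 2, 'utf-32': 4}
# emoji   {'utf-8': 4, 'utf-16': 4, 'utf-32': 4}
```

The emoji row shows the surrogate pair at work: it needs four bytes in UTF-16 because it lies outside the BMP.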

The choice of encoding is not trivial. An incorrect encoding interpretation can lead to data corruption, security vulnerabilities, and a broken user experience. It's paramount to ensure that the encoding used to save data matches the encoding used to read it.

The Pitfalls of Encoding Mismatch: "Broken Chinese" and Beyond

One of the most common and frustrating manifestations of an encoding mismatch is the appearance of "broken Chinese" or other forms of mojibake. This occurs when text encoded in one character set (e.g., an older, regional Chinese encoding like GBK) is mistakenly interpreted by a system expecting a different encoding (like UTF-8). The result is often a string of seemingly random, unreadable characters that bear no resemblance to the original text.
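A mismatch of this kind can be reproduced in a few lines. The sketch below is illustrative only: it encodes a short Chinese string as GBK, then reads the bytes back with the wrong codec, and also shows the familiar Western variant where UTF-8 bytes are read as Latin-1.

```python
original = "\u4e2d\u6587"  # the two Han characters for "Chinese (language)"
gbk_bytes = original.encode("gbk")

# Reading the bytes with the codec they were written in works as expected.
correct = gbk_bytes.decode("gbk")

# Reading them as UTF-8 fails outright in strict mode...
try:
    gbk_bytes.decode("utf-8")
    strict_failed = False
except UnicodeDecodeError:
    strict_failed = True

# ...and yields U+FFFD replacement characters in lenient mode.
lenient = gbk_bytes.decode("utf-8", errors="replace")

# The same class of bug with Western text: UTF-8 bytes read as Latin-1.
accented = "caf\u00e9"
garbled = accented.encode("utf-8").decode("latin-1")   # 'cafÃ©'
repaired = garbled.encode("latin-1").decode("utf-8")   # back to 'café'

print(correct == original, strict_failed, garbled, repaired)
```

The Latin-1 case can be reversed because every byte maps to some Latin-1 character, so nothing is lost. Once replacement characters appear, the original bytes are gone.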

This issue is not confined to East Asian languages; it can happen with any text containing characters outside the basic ASCII range. Common scenarios include:

- Database Migrations: Moving data between databases with different default character sets.

- File Transfers: Opening a text file saved with one encoding in an editor configured for another.

- Web Forms and APIs: Data submitted or received without explicit encoding declarations.

- Legacy Systems Integration: Interacting with older systems that predate widespread Unicode adoption.

For developers, especially those working with internationalized applications, understanding and correctly handling these encoding challenges is critical. If you're a C# developer grappling with such issues, you might find valuable insights in "Decoding Broken Chinese: A C# Developer's Guide to Unicode," which delves into practical strategies for identification and resolution.

Essential Tools for Unicode Management: Converters and Decoders

Given the complexity of character encodings and the potential for mismatches, specialized tools are indispensable for anyone working with digital text. The utility described in "Unicode Text Converter: Essential Tool for UTF and Character Encoding" is a prime example, designed to streamline the process of understanding, debugging, and transforming text between various Unicode and legacy encodings.

These tools typically offer a range of functionalities:

- Encoding/Decoding: Convert text from a specific encoding (e.g., UTF-8, UTF-16, ISO-8859-1) to another, or to its raw hexadecimal byte representation, and vice-versa. This is invaluable for troubleshooting mojibake.

- Character Lookup: Allow users to input a character and see its Unicode code point, name, and various byte representations, or to input a code point and see the corresponding character.

- Hex to Text Conversion: Directly interpret hexadecimal byte sequences as text according to a chosen encoding, useful when dealing with raw data streams.

- URL Encoding/Decoding: Handle characters that need to be safely transmitted within URLs.

- HTML Entity Conversion: Convert special characters to their HTML entity equivalents (e.g., &amp; for &) for web display.
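Most of the functions listed above have rough equivalents in Python's standard library, which can help in understanding what a converter tool does under the hood. The following is a minimal sketch, not a description of any particular tool; the sample strings are arbitrary.

```python
import html
import unicodedata
from urllib.parse import quote, unquote

text = "caf\u00e9"  # "café" with a precomposed é (U+00E9)

# Encoding/decoding and hex-to-text: view the UTF-8 bytes as hex, then reverse.
utf8_hex = text.encode("utf-8").hex(" ")            # '63 61 66 c3 a9'
from_hex = bytes.fromhex(utf8_hex).decode("utf-8")  # 'café'

# Character lookup: character to name, and name back to character.
char_name = unicodedata.name("\u00e9")  # 'LATIN SMALL LETTER E WITH ACUTE'
char_from_name = unicodedata.lookup("LATIN SMALL LETTER E WITH ACUTE")

# URL encoding/decoding (percent-encoding of the UTF-8 bytes).
url_encoded = quote(text)           # 'caf%C3%A9'
url_decoded = unquote(url_encoded)  # 'café'

# HTML entity conversion.
escaped = html.escape("Tom & Jerry <3")  # 'Tom &amp; Jerry &lt;3'
unescaped = html.unescape(escaped)       # 'Tom & Jerry <3'

print(utf8_hex, char_name, url_encoded, escaped, sep="\n")
```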

Using a reliable Unicode text converter can save countless hours of debugging, helping developers and content creators ensure that their text is consistently displayed and processed correctly across all platforms and systems.

Practical Tips for Flawless Unicode Handling

- Always Declare Encoding: Explicitly specify the character encoding for all text content. This includes HTTP headers for web pages, <meta> tags, file headers (like BOM for UTF-8), and database connection strings.

- Standardize on UTF-8: For new projects, particularly web-based applications, make UTF-8 your default encoding. Its widespread support and efficiency make it the most future-proof choice.

- Validate Input and Output: Implement robust validation to ensure that incoming data is in the expected encoding and that outgoing data is correctly encoded before storage or transmission.

- Be Aware of Defaults: Understand the default character encoding used by your programming languages, frameworks, operating systems, and databases, as these can vary significantly.

- Test Early and Often: Incorporate multilingual test data early in your development cycle to catch encoding issues before they become critical problems.
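Several of these tips translate directly into code. The sketch below, under the assumption of a Python application, passes the encoding explicitly when writing and reading a file, inspects the platform default, and validates incoming bytes with a strict decode. The function name `decode_utf8_or_none` and the sample data are illustrative.

```python
import locale
import tempfile
from pathlib import Path


def decode_utf8_or_none(data: bytes):
    """Return the decoded text, or None when the bytes are not valid UTF-8."""
    try:
        return data.decode("utf-8")  # strict mode: invalid bytes raise an error
    except UnicodeDecodeError:
        return None


# Be aware of defaults: this value differs between platforms and configurations.
default_encoding = locale.getpreferredencoding(False)

# Always declare the encoding instead of relying on that default.
sample = "na\u00efve \u4e2d\u6587"  # multilingual test data
with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "sample.txt"
    path.write_text(sample, encoding="utf-8")
    round_tripped = path.read_text(encoding="utf-8")

# Validate input before storing or processing it.
valid = decode_utf8_or_none(sample.encode("utf-8"))
invalid = decode_utf8_or_none(b"\xff\xfe\x00")  # 0xFF never occurs in UTF-8

print(default_encoding, round_tripped == sample, valid is not None, invalid)
```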

Beyond the Basics: Advanced Unicode Concepts

The world of Unicode extends far beyond basic character representation. For truly global and robust applications, several advanced concepts come into play:

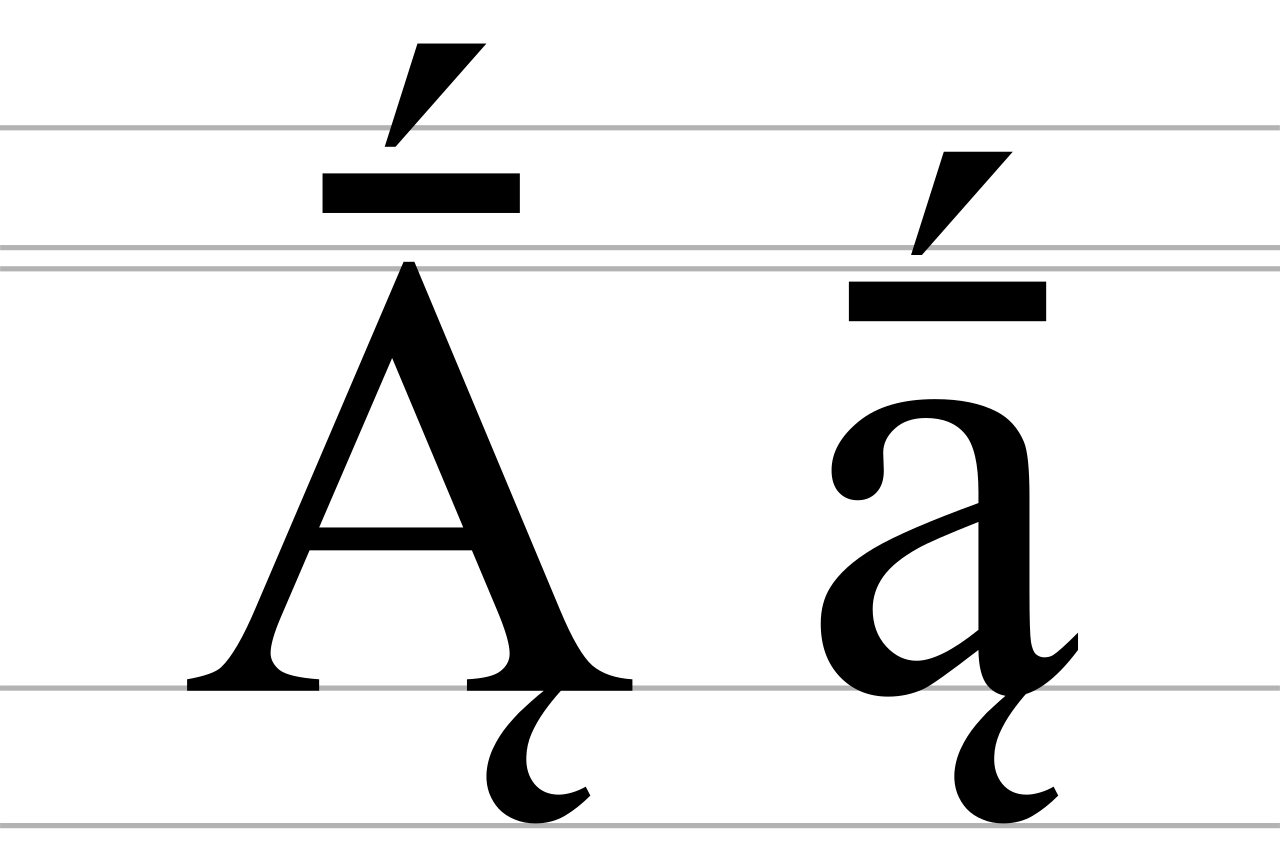

- Normalization: Characters can often be represented in multiple ways (e.g., 'é' can be a single code point or 'e' followed by a combining accent). Unicode defines normalization forms (NFC, NFD) to ensure consistent comparison and processing of text.

- Grapheme Clusters: What a user perceives as a single "character" (a grapheme) might actually be composed of multiple Unicode code points (e.g., a base character plus combining diacritics, or an emoji sequence like a family). Handling these correctly is vital for text manipulation and display.

- Collation: The rules for sorting and comparing text vary dramatically by language. Unicode provides algorithms and data for locale-sensitive collation, so that, for example, "ä" sorts after "z" in Swedish but alongside "a" in German.

- Directionality (BiDi): For languages like Arabic and Hebrew, text flows from right to left. Unicode includes algorithms to handle bidirectional text, allowing correct display of mixed left-to-right and right-to-left scripts.
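Some of these concepts can be observed with Python's standard `unicodedata` module. The sketch below covers normalization, the gap between code points and perceived characters, and the bidirectional class of individual characters. It does not segment grapheme clusters or perform locale-aware collation, which the standard library alone does not provide and which generally call for a dedicated library.

```python
import unicodedata

# Normalization: two spellings of the same visible character.
composed = "\u00e9"      # é as a single code point
decomposed = "e\u0301"   # 'e' followed by COMBINING ACUTE ACCENT

raw_equal = composed == decomposed                                 # False
nfc_equal = unicodedata.normalize("NFC", decomposed) == composed   # True
nfd_equal = unicodedata.normalize("NFD", composed) == decomposed   # True

# Grapheme clusters: one perceived "family" emoji, several code points
# (man, zero width joiner, woman, zero width joiner, girl).
family = "\U0001F468\u200D\U0001F469\u200D\U0001F467"
family_code_points = len(family)  # 5

# Directionality: each character carries a bidirectional class.
latin_class = unicodedata.bidirectional("A")        # 'L'  (left-to-right)
hebrew_class = unicodedata.bidirectional("\u05d0")  # 'R'  (right-to-left)
arabic_class = unicodedata.bidirectional("\u0627")  # 'AL' (Arabic letter)

print(raw_equal, nfc_equal, nfd_equal, family_code_points)
print(latin_class, hebrew_class, arabic_class)
```

Normalizing both sides to the same form before comparing strings avoids treating the two spellings of 'é' as different text.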

Conclusion

Exploring the comprehensive Unicode table reveals a marvel of international standardization – an invisible backbone supporting the vast majority of digital communication today. From understanding its fundamental purpose to navigating the intricacies of encoding forms like UTF-8, UTF-16, and UTF-32, the journey highlights the critical role Unicode plays in preventing "broken text" and enabling truly global applications. By leveraging powerful conversion tools and adhering to best practices in encoding management, developers and content creators can ensure their digital messages are clear, consistent, and universally understood. In an increasingly interconnected world, a thorough grasp of Unicode isn't just a technical skill; it's a foundation for seamless, respectful, and effective communication across all linguistic and cultural boundaries.